AI & Automation | Tech Talent & Hiring

GenAI Noise to System Failure

Why Organizations Are Failing at AI Hiring in 2026

AI hiring is getting more expensive. But it isn’t getting better. Compensation for engineers working on LLM orchestration and agentic workflows is growing close to 20% faster than traditional stacks. On paper, that should translate into faster adoption and stronger systems.

In practice, it hasn’t. Teams are expanding and roles are being filled, but once projects move beyond prototypes, momentum starts to slow. Systems struggle to scale. Roadmaps stretch. This is not a talent shortage. It’s a calibration problem.

Companies are optimizing for visibility, not viability. Hiring for what looks like AI capability, not what actually holds up in production. And that gap is where most AI initiatives begin to break.

The Market Distortion

The numbers suggest that the AI talent market is working exactly as expected. Compensation is rising. Demand is strong. Hiring cycles are accelerating. On the surface, this looks like a healthy, high-growth ecosystem.

Candidates working on AI-enabled workflows are seeing compensation grow nearly 20% faster than traditional engineering roles. At the same time, roles like MLOps and DevOps are now reaching salary bands once reserved for senior backend or platform engineers.

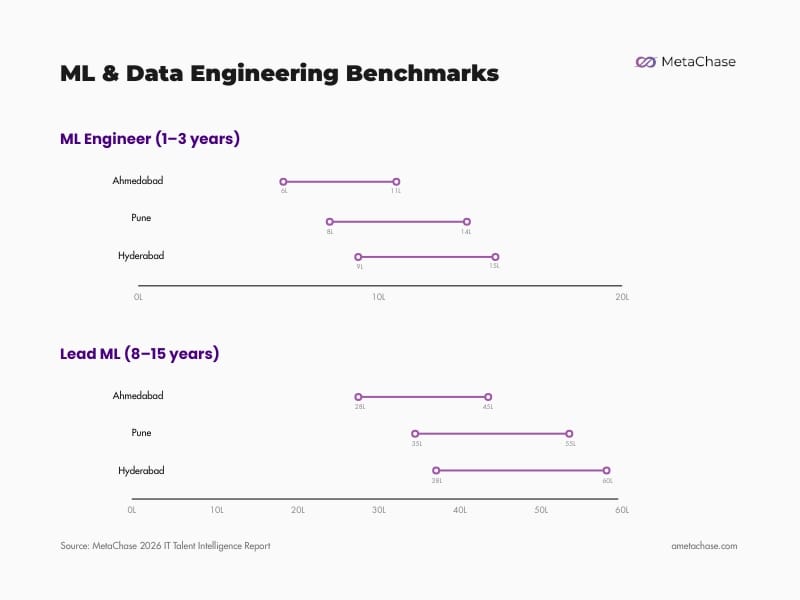

In practical terms, that means a Lead ML Engineer with 8–15 years of experience can command anywhere from ~28L to 60L+, while even early-career ML Engineers in the 1–3 year range are already operating within the 6L to 15L band.

This shift becomes clearer when compared across adjacent roles. Senior Java developers in the 5–7 year range operate within the ~12L to 30L band, while DevOps roles in the same range sit between ~10L and 27L. MLOps roles, meanwhile, are already pushing into the ~11L to 30L range.

That level of compression between mid-level and senior compensation is not typical of a stable market. It usually points to one thing: compensation is being driven more by perceived relevance than by consistent, production-level output.
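The band comparison above can be checked with a few lines of arithmetic. The sketch below is illustrative only: the figures are copied from the ranges quoted in this article, "L" is assumed to mean lakh INR per annum, and the `overlap_share` measure is an assumption introduced here, not a metric the article defines.

```python
# Salary bands (lower, upper) in L per annum, as quoted in the text.
# Assumption: "L" means lakh INR; the article does not spell it out.
BANDS = {
    "Lead ML Engineer (8-15 yrs)": (28, 60),  # text says "60L+", so the true top is open-ended
    "ML Engineer (1-3 yrs)": (6, 15),
    "Senior Java (5-7 yrs)": (12, 30),
    "DevOps (5-7 yrs)": (10, 27),
    "MLOps": (11, 30),  # experience range not stated in the text
}


def midpoint(band):
    """Simple midpoint of a (lower, upper) band."""
    lo, hi = band
    return (lo + hi) / 2


def overlap_share(a, b):
    """Fraction of band a's width that also falls inside band b (hypothetical measure)."""
    lo = max(a[0], b[0])
    hi = min(a[1], b[1])
    return max(0, hi - lo) / (a[1] - a[0])


mlops = BANDS["MLOps"]
for role, band in BANDS.items():
    if role != "MLOps":
        share = overlap_share(mlops, band)
        print(f"{role}: midpoint {midpoint(band):.1f}L, covers {share:.0%} of the MLOps band")
```

On these figures, the Senior Java band covers roughly 95% of the MLOps band and the DevOps band roughly 84%, which is the near-total overlap the comparison above describes.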

This is where the market begins to misalign. The definition of what qualifies as “AI talent” has expanded too quickly. Different roles and levels of depth are being grouped under the same label, even when the actual responsibilities and impact vary significantly.

From the outside, the category appears uniform. Inside teams, the differences surface quickly. And once the market stops distinguishing between experimentation and execution, hiring decisions tend to follow the same pattern.

The Proficiency Mirage

In 2026, “GenAI” on a resume is no longer a differentiator; it is noise.

Most candidates have mastered API Fluency, the ability to connect models and write prompts.

This creates a “Proficiency Mirage”: talent that appears to be a perfect fit during a 45-minute technical interview may lack the Architectural Integrity required to sustain a system under real-world load.

The market is currently flooded with “Orchestrators” who can build a prototype in 48 hours but cannot manage the drift, latency, or security of that same system 48 days later.

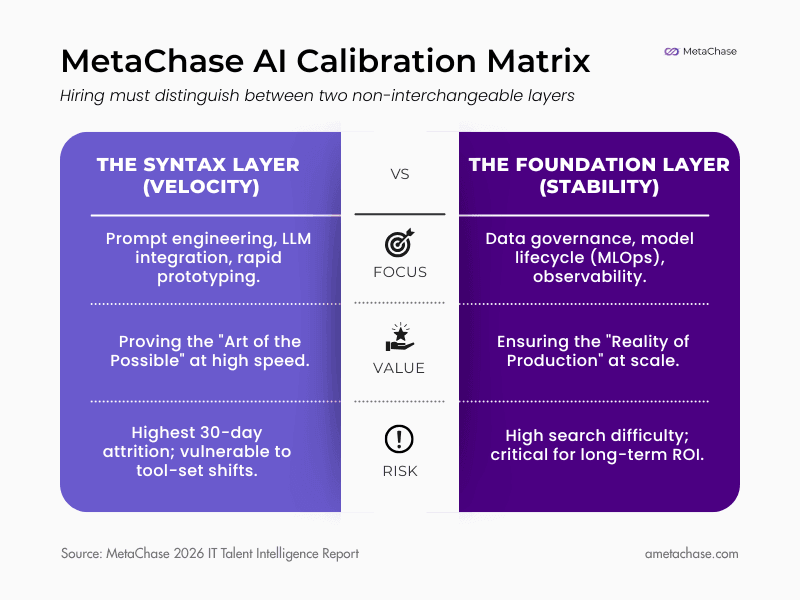

The MetaChase Calibration Matrix

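To stop the cycle of failed deployments, hiring must distinguish between two non-interchangeable layers: “Syntax” talent, fluent in connecting models and building prototypes, and “Foundation” talent, with the architectural depth to sustain a system under real-world load.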

The Financial Leak

When you hire “Syntax” talent to do “Foundation” work, the cost rarely shows up in the salary; it shows up in the Technical Debt.

In practice, this leads to systems that perform well in controlled environments but struggle under real-world usage. Fixes become reactive. Dependencies grow. Stability becomes harder to maintain.

Systems built without foundational depth require constant “babysitting” by senior backend engineers, pulling your most expensive assets away from innovation to focus on basic stability. In 2026, this misalignment is the leading cause of AI budget depletion without corresponding revenue growth.

Where Hiring Breaks Down

This is where the impact shows up in hiring.

By the time a role reaches hiring teams, the confusion is already built into the mandate. Job descriptions often try to cover experimentation, system design, deployment, and scale in a single profile. What looks strong on paper is often a mix of incompatible expectations.

- High rejection rates from hiring managers despite strong-looking resumes

- “GenAI” experience used as a filter, but not a reliable indicator of depth

- Candidates perform well in discussions but lack production exposure

- Repeated interview cycles without clear alignment on success criteria

Sourcing continues. Screening expands. Shortlists grow, but closures don’t follow at the same pace. Roles remain open longer, not because talent is unavailable, but because evaluation criteria are not aligned with how the role actually needs to function.

Hiring managers spend more time filtering than selecting. Technical teams get pulled into interviews that don’t convert. Internal confidence in the hiring pipeline starts to drop.

A large share of candidates in the 0–5 year range are actively moving, driven by rapid salary shifts and short tenure cycles. This creates a high-volume pipeline, but not a stable one. So the system keeps moving, but without clarity. And when that happens, hiring becomes reactive. Decisions are made to close roles, not to build teams that will hold over time.

What This Means for Hiring

The Recalibration Gap

Hiring in 2026 is no longer a linear hand-off; it is a synchronized sprint. The primary reason AI initiatives stall isn’t a lack of skill; it is a latency of information.

In high-velocity AI environments, a technical requirement is often a hypothesis that changes after every sprint. If the Talent Acquisition (TA) team is sourcing based on a month-old mandate, they are essentially building a pipeline for a version of the project that no longer exists. This creates a “Lagging Indicator” pipeline: high volume, zero relevance, and massive technical frustration.

The Integration Mandate

To bridge this, we must dismantle the wall between Market Intelligence and Technical Execution.

- The Intelligence Vacuum: Technical leads often architect systems without knowing the current market scarcity for specific niches.

- The Context Vacuum: TA teams often hunt for keywords without understanding why a specific stack was discarded in the last experiment.

True calibration requires the Hiring Manager to act as a market scout and the Recruiter to act as a technical strategist. Success is not “filling the seat”; it is the constant realignment of the JD against both the Market Reality and the Engineering Reality. If these two teams aren’t recalibrating weekly, the hiring process is just expensive guesswork.
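One way to make the weekly recalibration rule concrete is a simple staleness check on each open mandate. This is a minimal sketch under stated assumptions: the `is_stale` helper, the seven-day interval, and the example dates are hypothetical and introduced here for illustration; only the "weekly" cadence and the "month-old mandate" scenario come from the text.

```python
from datetime import date, timedelta

# "Weekly" recalibration, per the text; the exact threshold is an assumption.
RECALIBRATION_INTERVAL = timedelta(days=7)


def is_stale(last_recalibrated: date, today: date,
             interval: timedelta = RECALIBRATION_INTERVAL) -> bool:
    """Return True if the mandate has gone longer than `interval` without review."""
    return today - last_recalibrated > interval


# Hypothetical example: a mandate last reviewed a month ago.
month_old = is_stale(date(2026, 1, 1), date(2026, 2, 1))
# Hypothetical example: a mandate reviewed exactly one week ago.
on_schedule = is_stale(date(2026, 1, 1), date(2026, 1, 8))
print(month_old, on_schedule)
```

A mandate flagged as stale would be a prompt for the Hiring Manager and Recruiter to review the JD together before sourcing continues against it.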

The Human Decision Premium

As AI saturates the top of the funnel, automating sourcing, screening, and initial assessments, the value of the human handshake has undergone massive inflation.

In a world where every candidate can use an LLM to generate a “perfect” resume or pass a standard coding test, technical signals are becoming commoditized. The only remaining moat is Judgment.

When everything is labeled as “AI,” distinctions begin to blur. The human-led portion of the interview is no longer about verifying “can they code?” but “can they think?” We are moving from a world of Skill Validation to a world of Character and Context Assessment. The more we use AI to find talent, the more we must rely on human intuition to select it.

Stop sourcing syntax. Start engineering retention.

About the Author

Elvin Vincent

Managing Partner & COO

Elvin is the catalyst behind MetaChase’s operational precision and candidate-centric philosophy. He specializes in decoding complex organizational needs to find the "perfect-fit" talent that others overlook. Approachable yet deeply analytical, Elvin ensures that every "Search" culminates in a transformative hire that fuels long-term enterprise growth.
